Your content team is already using AI. The question is whether your healthcare CMS is aware of it.

According to a McKinsey survey of healthcare leaders, 85% are exploring or have already adopted generative AI capabilities. Yet in the same research, 91% said they do not feel "very prepared" to deploy it responsibly. That gap between adoption enthusiasm and operational readiness is not abstract. It is the space where compliance exposure lives: AI-generated content that bypasses editorial approval chains, skips accessibility validation, and reaches patient-facing pages with no audit trail documenting who reviewed it or when.

For Heads of Digital and Digital Experience Managers in healthcare, the risk is not AI itself. It is AI that operates outside governed workflows.

The Bolt-on AI Problem

Most healthcare content teams have adopted AI through browser extensions, standalone writing assistants, or copy-paste from external generators. The output enters the CMS as finished text, indistinguishable from manually authored content but missing every governance checkpoint the organization spent years building.

The downstream consequences are specific and regulatory. Ungoverned AI pipelines are vulnerable to prompt injection, data exploitation, and the quiet exposure of protected health information. In the AMA's 2026 physician survey, 86% of doctors identified data privacy as critical for broader AI adoption, and 70% expressed concern about AI-driven skill degradation in clinical training. Separately, 72% of healthcare executives now rank data privacy as the top risk in AI adoption. When AI-generated content appears on a hospital website without documented human review, it is a concrete instance of the governance vacuum these stakeholders are warning about.

PHI Exposure: Ungoverned AI pipelines can leak protected health information into prompts and outputs.

Prompt Injection: External AI tools are vulnerable to manipulation that bypasses intended guardrails.

Polished but Wrong: AI output appears structurally sound while containing subtle clinical errors or bias.

Then there is what researchers describe as the "polished output" problem. Generative AI produces content that appears structurally sound on first read but may contain subtle bias, omitted clinical nuance, or outdated references to the standard of care. The Nielsen Norman Group's research on AI-assisted content creation confirms this pattern: AI can generate outputs that look polished at first glance while still containing issues that compromise quality. In healthcare, where a single content error on a patient education page can trigger regulatory scrutiny, a polished appearance without governed review is a liability, not a productivity gain.

What Governed AI in Healthcare CMS Looks Like

The alternative to bolt-on AI is not slower content operations. It is AI that operates within the same governance architecture your team already trusts. Three capabilities distinguish governed AI from ungoverned tooling.

AI-assisted editorial drafting within approval workflows

When AI helps draft or revise content, that output should enter the same tiered workflow as any manually authored page: draft, review, publish, archive. Every AI-generated revision routes through role-based permissions and requires human sign-off before it reaches a live environment. The AI accelerates creation. The workflow governs what gets published.
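The routing rule can be sketched as a role-gated state machine. This minimal Python example assumes hypothetical roles (`author`, `editor`, `reviewer`), a hypothetical `TRANSITIONS` table, and a hypothetical `ai_draft` helper. None of these is any CMS's actual API. The state names mirror the draft, review, publish, archive sequence above. AI output enters as a draft and gets no special path. Only a human holding the reviewer role can publish it, and every successful transition is appended to an audit history.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone

# Hypothetical transition table: (from_state, to_state) -> roles allowed to make that move.
# No AI-specific role appears anywhere, so AI output gets no shortcut to publication.
TRANSITIONS = {
    ("draft", "in_review"): {"author", "editor"},
    ("in_review", "draft"): {"editor", "reviewer"},
    ("in_review", "published"): {"reviewer"},
    ("published", "archived"): {"editor", "reviewer"},
}


class WorkflowError(Exception):
    """Raised when a requested state change is not permitted."""


@dataclass
class Revision:
    body: str
    source: str  # "human" or "ai"; recorded for the audit trail only
    state: str = "draft"
    history: list = field(default_factory=list)

    def move(self, to_state: str, user: str, role: str) -> None:
        allowed_roles = TRANSITIONS.get((self.state, to_state))
        if allowed_roles is None:
            raise WorkflowError(f"no transition {self.state} -> {to_state}")
        if role not in allowed_roles:
            raise WorkflowError(f"role {role!r} cannot move {self.state} -> {to_state}")
        # Only successful transitions are recorded: who, in what role, from/to, and when.
        self.history.append({
            "user": user,
            "role": role,
            "from": self.state,
            "to": to_state,
            "source": self.source,
            "at": datetime.now(timezone.utc).isoformat(),
        })
        self.state = to_state


def ai_draft(body: str) -> Revision:
    """AI output always starts as a draft, exactly like manually authored content."""
    return Revision(body=body, source="ai")


if __name__ == "__main__":
    rev = ai_draft("Preparing for your colonoscopy: ...")
    rev.move("in_review", user="dana", role="author")
    try:
        rev.move("published", user="dana", role="author")
    except WorkflowError as err:
        print("blocked:", err)  # authors cannot publish
    rev.move("published", user="dr_lee", role="reviewer")  # human sign-off
    print(rev.state, len(rev.history))
```

The `source` field is recorded but never consulted during permission checks, so AI-assisted and manually authored revisions follow identical rules. In a production CMS, the transition table and audit log would live in the platform's own configuration and storage, not in application code.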

Pre-publish accessibility validation

Healthcare digital properties carry legal accessibility obligations under the ADA and Section 508, which are typically assessed against the Web Content Accessibility Guidelines (WCAG), now at version 2.2. AI-powered accessibility checks that run before publish, catching contrast failures, missing alt text, or keyboard navigation gaps, convert a post-launch audit liability into a pre-launch quality gate. The difference is whether you discover accessibility violations from a complaint or from your own system.
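A pre-publish gate can be as simple as a function that returns a list of blocking issues. This sketch uses standard-library Python and covers two of the checks named above. The first is missing `alt` attributes on `<img>` tags. The second is text/background contrast, using the WCAG relative-luminance and contrast-ratio formulas with the 4.5:1 level AA threshold for normal-size text. The sketch assumes the caller supplies the color pairs, for example from theme settings. Real checkers derive them from rendered CSS, and keyboard-navigation testing needs a real browser, so both are out of scope here. The function names are illustrative.

```python
from html.parser import HTMLParser
from typing import List, Tuple

AA_NORMAL_TEXT = 4.5  # WCAG level AA minimum contrast ratio for normal-size text


def _linearize(channel: int) -> float:
    """Convert one 8-bit sRGB channel to linear light, per the WCAG relative-luminance definition."""
    c = channel / 255
    return c / 12.92 if c <= 0.04045 else ((c + 0.055) / 1.055) ** 2.4


def relative_luminance(hex_color: str) -> float:
    h = hex_color.lstrip("#")
    r, g, b = (int(h[i:i + 2], 16) for i in (0, 2, 4))
    return 0.2126 * _linearize(r) + 0.7152 * _linearize(g) + 0.0722 * _linearize(b)


def contrast_ratio(fg: str, bg: str) -> float:
    """(L1 + 0.05) / (L2 + 0.05), where L1 is the lighter of the two luminances."""
    lighter, darker = sorted((relative_luminance(fg), relative_luminance(bg)), reverse=True)
    return (lighter + 0.05) / (darker + 0.05)


class _MissingAltFinder(HTMLParser):
    """Collects <img> tags with no alt attribute; alt="" is valid for decorative images."""

    def __init__(self) -> None:
        super().__init__()
        self.missing: List[str] = []

    def handle_starttag(self, tag, attrs):
        attributes = dict(attrs)
        if tag == "img" and "alt" not in attributes:
            self.missing.append(attributes.get("src") or "<no src>")


def prepublish_issues(html: str, color_pairs: List[Tuple[str, str]]) -> List[str]:
    """Return blocking accessibility issues; an empty list means the gate passes."""
    finder = _MissingAltFinder()
    finder.feed(html)
    finder.close()
    issues = [f"missing alt text: {src}" for src in finder.missing]
    for fg, bg in color_pairs:
        ratio = contrast_ratio(fg, bg)
        if ratio < AA_NORMAL_TEXT:
            issues.append(f"contrast {ratio:.2f}:1 for {fg} on {bg} is below {AA_NORMAL_TEXT}:1")
    return issues


if __name__ == "__main__":
    page = '<p>Visiting hours</p><img src="lobby.jpg"><img src="divider.png" alt="">'
    for issue in prepublish_issues(page, [("#777777", "#ffffff")]):
        print(issue)
```

An empty list means the page may proceed to publish. Anything else is surfaced to the editor while the content is still in review. An empty `alt=""` is deliberately accepted, because that is the correct markup for purely decorative images.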

AI-powered clinical content classification

Healthcare content spans service lines, conditions, treatment pathways, and patient populations. AI taxonomy tools can classify and tag content consistently across thousands of pages, but only when every classification decision is logged and auditable. Governed metadata routing assigns clear ownership, enforces data minimization, and ensures clinical tagging remains human-reviewable at every stage.
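The audit requirement for AI classification can be sketched as a suggest-then-review pattern. The model's proposed terms are recorded as suggestions, a human approves or rejects each one, and every step lands in an append-only log. In this hypothetical Python sketch, `TagDecision`, `AuditLog`, `suggest_tags`, and `review_tag` are illustrative names. The classifier itself is assumed to return a plain list of term names. As one way to apply data minimization, the log stores only node IDs, term names, and actor identities, never page body text.

```python
import json
from dataclasses import dataclass, asdict
from datetime import datetime, timezone
from typing import List, Optional


def _now() -> str:
    return datetime.now(timezone.utc).isoformat()


@dataclass
class TagDecision:
    node_id: int
    term: str
    proposed_by: str                   # who or what suggested the tag, e.g. a model name
    status: str = "suggested"          # suggested -> approved or rejected, by a human only
    reviewed_by: Optional[str] = None


class AuditLog:
    """Append-only log of JSON lines; entries carry IDs and terms, never body text."""

    def __init__(self) -> None:
        self._lines: List[str] = []

    def record(self, event: str, decision: TagDecision) -> None:
        entry = {"event": event, "at": _now(), **asdict(decision)}
        self._lines.append(json.dumps(entry, sort_keys=True))

    def entries(self) -> List[dict]:
        return [json.loads(line) for line in self._lines]


def suggest_tags(node_id: int, terms: List[str], model: str, log: AuditLog) -> List[TagDecision]:
    """Record AI-proposed terms as suggestions; nothing is applied yet."""
    decisions = [TagDecision(node_id, term, proposed_by=model) for term in terms]
    for decision in decisions:
        log.record("suggested", decision)
    return decisions


def review_tag(decision: TagDecision, reviewer: str, approve: bool, log: AuditLog) -> None:
    """A human reviewer settles each suggestion exactly once."""
    if decision.status != "suggested":
        raise ValueError("decision already reviewed")
    decision.status = "approved" if approve else "rejected"
    decision.reviewed_by = reviewer
    log.record(decision.status, decision)


def applied_terms(decisions: List[TagDecision]) -> List[str]:
    """Only human-approved terms are attached to the content."""
    return [d.term for d in decisions if d.status == "approved"]
```

Suggestions stay separate from applied terms, so an unreviewed AI classification never reaches published metadata. The log can also answer who approved which tag and when.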

The connective principle across all three: AI accelerates each stage of content operations but never bypasses the governance layer. Every AI action is logged. Every output requires human approval. The HHS AI Strategy reinforces this position at the federal level, calling for transparency, accountability, and risk management as foundational requirements for AI deployment across health and human services.

How Varbase Governs AI Across the Editorial Workflow

Varbase is built on Drupal's content governance architecture, and its AI capabilities operate inside that architecture rather than alongside it. The Varbase AI Editor Assistant adds AI-powered drafting directly inside CKEditor 5, where every AI-generated revision enters the same Draft → In Review → Published → Archived workflow as manually authored content. The Varbase AI Image Alt recipe automatically generates alt text for image fields, feeding AI output into editorial review before publication. The Varbase AI Taxonomy Tagging recipe classifies and tags content based on body field analysis, with every taxonomy assignment logged and human-reviewable.

Out-of-the-box editorial workflows enforce tiered moderation with role-based permissions. The Admin Audit Trail module logs every workflow event. The Moderation Sidebar provides frictionless human-in-the-loop sign-off via an off-canvas content moderation menu. Scheduled publishing rules through the Scheduler Content Moderation Integration module reduce manual deployment errors.

These are not AI features bolted onto a CMS. They are governance requirements that AI must meet.

Evaluating AI-in-CMS for Healthcare

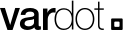

If the answer to any of these questions is no, the AI is faster but not governed. In healthcare, that distinction carries regulatory weight.